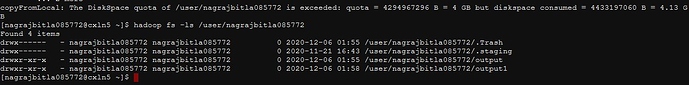

My data is 2 GB, and I am not able to load it into HDFS in my space; I get a disk space quota exceeded error. Could you please help me resolve this quickly?

    load data local inpath '/home/veerayyakumarg1811/parkingviolations.csv' into table nyc_parking_violations;

    Loading data to table veerugandhad.nyc_parking_violations
    Failed with exception org.apache.hadoop.hdfs.protocol.DSQuotaExceededException: The DiskSpace quota of /user/veerayyakumarg1811 is exceeded: quota = 4294967296 B = 4 GB but diskspace consumed = 4575774612 B = 4.26 GB
        at org.apache.hadoop.hdfs.server.namenode.DirectoryWithQuotaFeature.verifyStoragespaceQuota(DirectoryWithQuotaFeature.java:211)
        at org.apache.hadoop.hdfs.server.namenode.DirectoryWithQuotaFeature.verifyQuota(DirectoryWithQuotaFeature.java:239)
        at org.apache.hadoop.hdfs.server.namenode.FSDirectory.verifyQuota(FSDirectory.java:1073)
        at org.apache.hadoop.hdfs.server.namenode.FSDirectory.updateCount(FSDirectory.java:902)
        at org.apache.hadoop.hdfs.server.namenode.FSDirectory.updateCount(FSDirectory.java:861)
        at org.apache.hadoop.hdfs.server.namenode.FSDirectory.addBlock(FSDirectory.java:567)
        at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.saveAllocatedBlock(FSNamesystem.java:3803)
        at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.storeAllocatedBlock(FSNamesystem.java:3387)
        at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.getAdditionalBlock(FSNamesystem.java:3268)
        at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.addBlock(NameNodeRpcServer.java:850)
        at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.addBlock(ClientNamenodeProtocolServerSideTranslatorPB.java:504)
        at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)
        at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:640)
        at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:982)
        at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2351)
        at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2347)
        at java.security.AccessController.doPrivileged(Native Method)
        at javax.security.auth.Subject.doAs(Subject.java:422)
        at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1866)
        at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2345)
    FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.MoveTask
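A likely reason a 2 GB file trips a 4 GB quota: an HDFS space quota counts every replica of every block, so a file is charged roughly its size times the replication factor. The sketch below only does that arithmetic. The replication factor of this cluster is not stated in the post; 3 is the usual HDFS default (`dfs.replication`) and is an assumption here, as are the helper names, which are hypothetical.

```python
# Sketch: estimate how much HDFS space quota a file will consume.
# Assumption: every replica of every block counts against the directory's
# space quota, so charge ~= file size * replication factor.
# Assumption: replication = 3 (common HDFS default); the post does not say.
# Block rounding and in-flight block reservations are ignored.

GB = 1024 ** 3  # the error reports 4294967296 B as "4 GB", i.e. 4 * 1024**3


def quota_charge(file_bytes: int, replication: int = 3) -> int:
    """Raw bytes the file would count against an HDFS space quota."""
    return file_bytes * replication


def fits_in_quota(file_bytes: int, quota_bytes: int, used_bytes: int = 0,
                  replication: int = 3) -> bool:
    """True if the file's replicated size fits in the remaining quota."""
    return used_bytes + quota_charge(file_bytes, replication) <= quota_bytes


def max_file_size(quota_bytes: int, used_bytes: int = 0,
                  replication: int = 3) -> int:
    """Largest file (in bytes) that would still fit under the quota."""
    return max(0, (quota_bytes - used_bytes) // replication)


if __name__ == "__main__":
    quota = 4294967296   # quota value taken from the error message
    csv_size = 2 * GB    # "my data is 2 GB" (approximate)
    print(quota_charge(csv_size) / GB)     # 6.0
    print(fits_in_quota(csv_size, quota))  # False
    print(max_file_size(quota))
```

Under that assumption the 2 GB CSV alone would need about 6 GB of quota, more than the 4 GB limit in the error, even before counting other files already under `/user/veerayyakumarg1811`.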